Summary

Daily on Roblox, 65.5 million customers interact with hundreds of thousands of experiences, totaling 14.0 billion hours quarterly. This interplay generates a petabyte-scale information lake, which is enriched for analytics and machine studying (ML) functions. It’s resource-intensive to hitch reality and dimension tables in our information lake, so to optimize this and scale back information shuffling, we embraced Realized Bloom Filters [1]—sensible information constructions utilizing ML. By predicting presence, these filters significantly trim be a part of information, enhancing effectivity and decreasing prices. Alongside the best way, we additionally improved our mannequin architectures and demonstrated the substantial advantages they provide for decreasing reminiscence and CPU hours for processing, in addition to rising operational stability.

Introduction

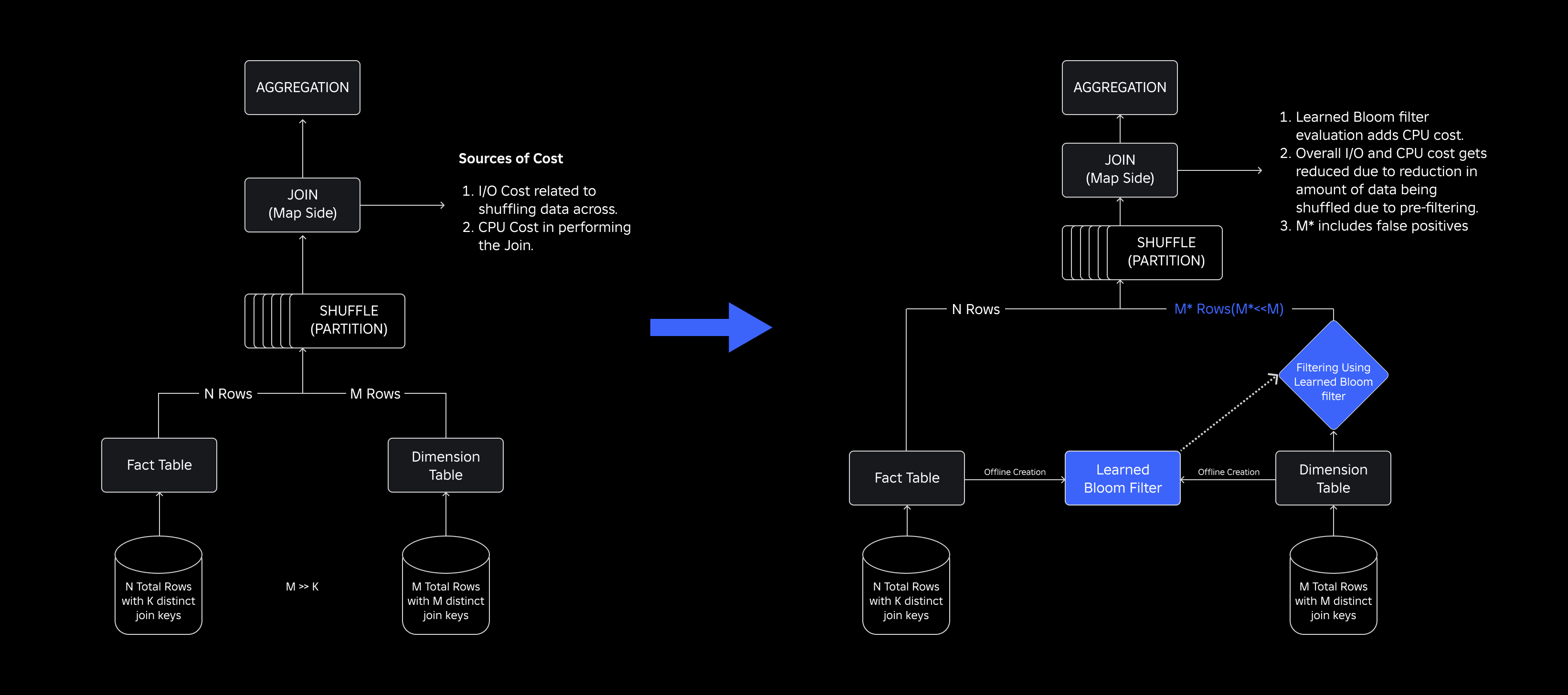

In our information lake, reality tables and information cubes are temporally partitioned for environment friendly entry, whereas dimension tables lack such partitions, and becoming a member of them with reality tables throughout updates is resource-intensive. The important thing house of the be a part of is pushed by the temporal partition of the actual fact desk being joined. The dimension entities current in that temporal partition are a small subset of these current in your complete dimension dataset. Because of this, the vast majority of the shuffled dimension information in these joins is ultimately discarded. To optimize this course of and scale back pointless shuffling, we thought-about utilizing Bloom Filters on distinct be a part of keys however confronted filter dimension and reminiscence footprint points.

To deal with them, we explored Realized Bloom Filters, an ML-based answer that reduces Bloom Filter dimension whereas sustaining low false optimistic charges. This innovation enhances the effectivity of be a part of operations by decreasing computational prices and enhancing system stability. The next schematic illustrates the standard and optimized be a part of processes in our distributed computing setting.

Enhancing Be a part of Effectivity with Realized Bloom Filters

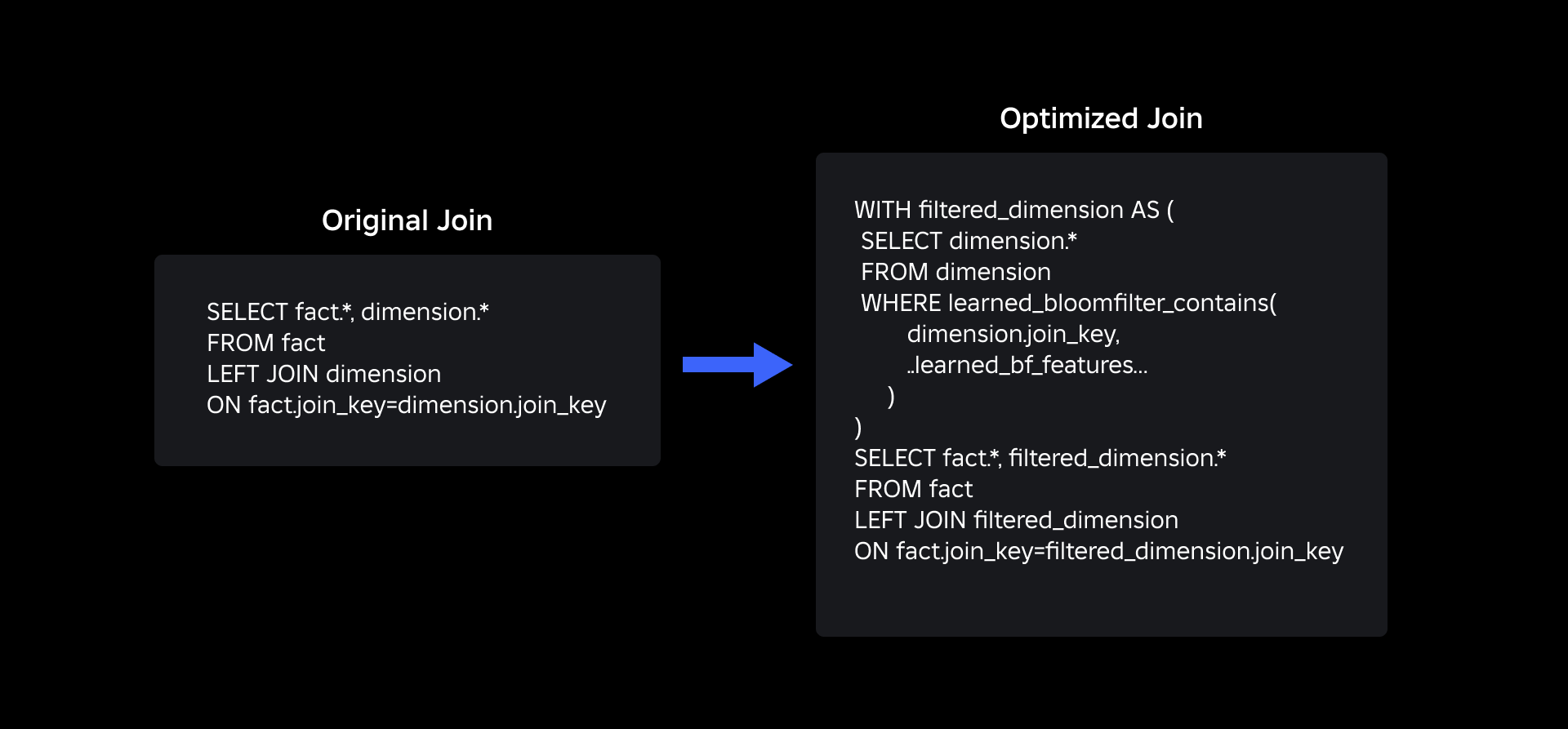

To optimize the be a part of between reality and dimension tables, we adopted the Realized Bloom Filter implementation. We constructed an index from the keys current within the reality desk and subsequently deployed the index to pre-filter dimension information earlier than the be a part of operation.

Evolution from Conventional Bloom Filters to Realized Bloom Filters

Whereas a conventional Bloom Filter is environment friendly, it provides 15-25% of further reminiscence per employee node needing to load it to hit our desired false optimistic charge. However by harnessing Realized Bloom Filters, we achieved a significantly diminished index dimension whereas sustaining the identical false optimistic charge. That is due to the transformation of the Bloom Filter right into a binary classification downside. Constructive labels point out the presence of values within the index, whereas unfavourable labels imply they’re absent.

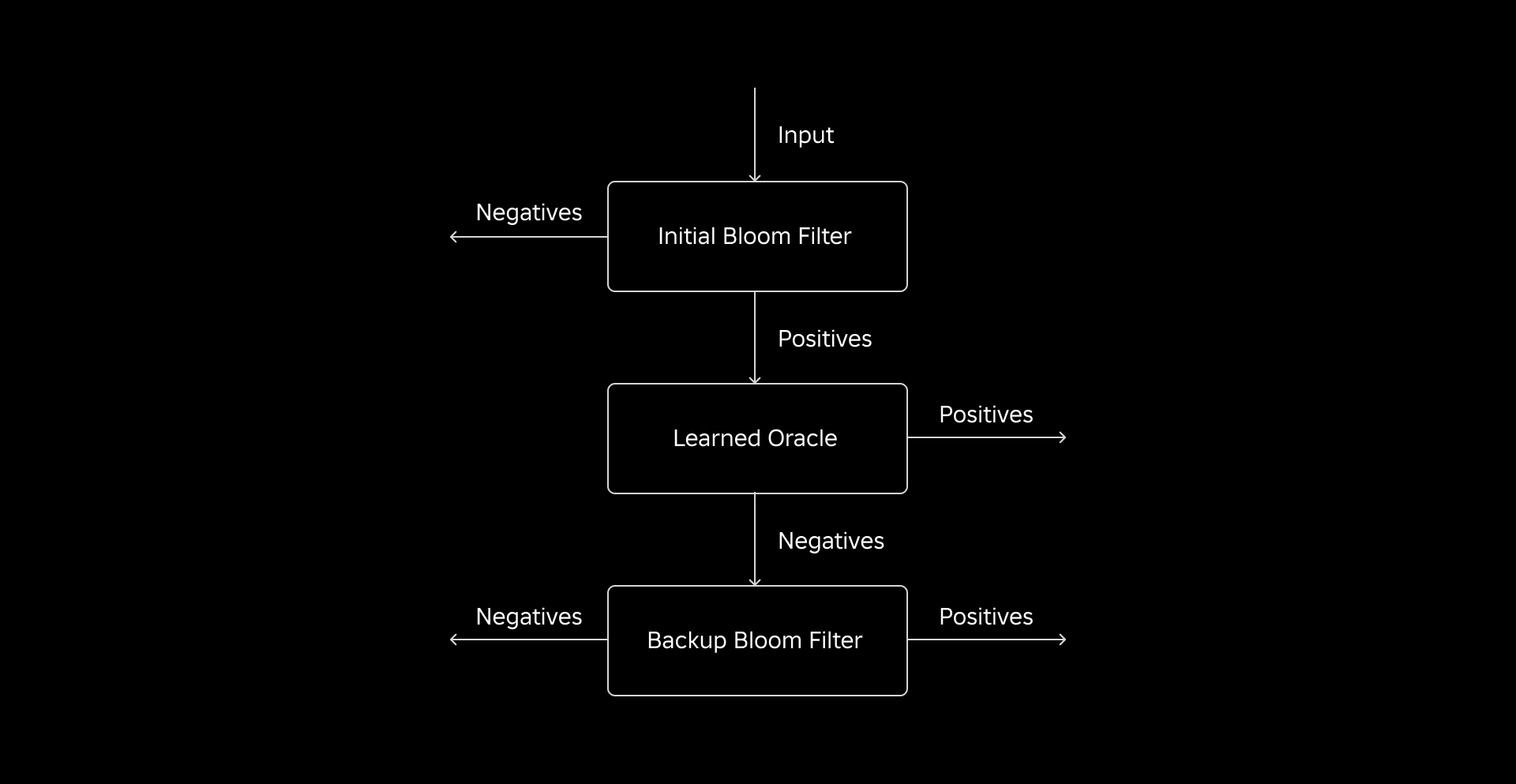

The introduction of an ML mannequin facilitates the preliminary examine for values, adopted by a backup Bloom Filter for eliminating false negatives. The diminished dimension stems from the mannequin’s compressed illustration and diminished variety of keys required by the backup Bloom Filter. This distinguishes it from the standard Bloom Filter method.

As a part of this work, we established two metrics for evaluating our Realized Bloom Filter method: the index’s ultimate serialized object dimension and CPU consumption in the course of the execution of be a part of queries.

Navigating Implementation Challenges

Our preliminary problem was addressing a extremely biased coaching dataset with few dimension desk keys within the reality desk. In doing so, we noticed an overlap of roughly one-in-three keys between the tables. To deal with this, we leveraged the Sandwich Realized Bloom Filter method [2]. This integrates an preliminary conventional Bloom Filter to rebalance the dataset distribution by eradicating the vast majority of keys that had been lacking from the actual fact desk, successfully eliminating unfavourable samples from the dataset. Subsequently, solely the keys included within the preliminary Bloom Filter, together with the false positives, had been forwarded to the ML mannequin, sometimes called the “discovered oracle.” This method resulted in a well-balanced coaching dataset for the discovered oracle, overcoming the bias situation successfully.

The second problem centered on mannequin structure and coaching options. Not like the basic downside of phishing URLs [1], our be a part of keys (which typically are distinctive identifiers for customers/experiences) weren’t inherently informative. This led us to discover dimension attributes as potential mannequin options that may assist predict if a dimension entity is current within the reality desk. For instance, think about a reality desk that incorporates consumer session info for experiences in a selected language. The geographic location or the language desire attribute of the consumer dimension could be good indicators of whether or not a person consumer is current within the reality desk or not.

The third problem—inference latency—required fashions that each minimized false negatives and supplied fast responses. A gradient-boosted tree mannequin was the optimum alternative for these key metrics, and we pruned its function set to stability precision and pace.

Our up to date be a part of question utilizing discovered Bloom Filters is as proven beneath:

Outcomes

Listed here are the outcomes of our experiments with Realized Bloom filters in our information lake. We built-in them into 5 manufacturing workloads, every of which possessed totally different information traits. Probably the most computationally costly a part of these workloads is the be a part of between a reality desk and a dimension desk. The important thing house of the actual fact tables is roughly 30% of the dimension desk. To start with, we talk about how the Realized Bloom Filter outperformed conventional Bloom Filters by way of ultimate serialized object dimension. Subsequent, we present efficiency enhancements that we noticed by integrating Realized Bloom Filters into our workload processing pipelines.

Realized Bloom Filter Dimension Comparability

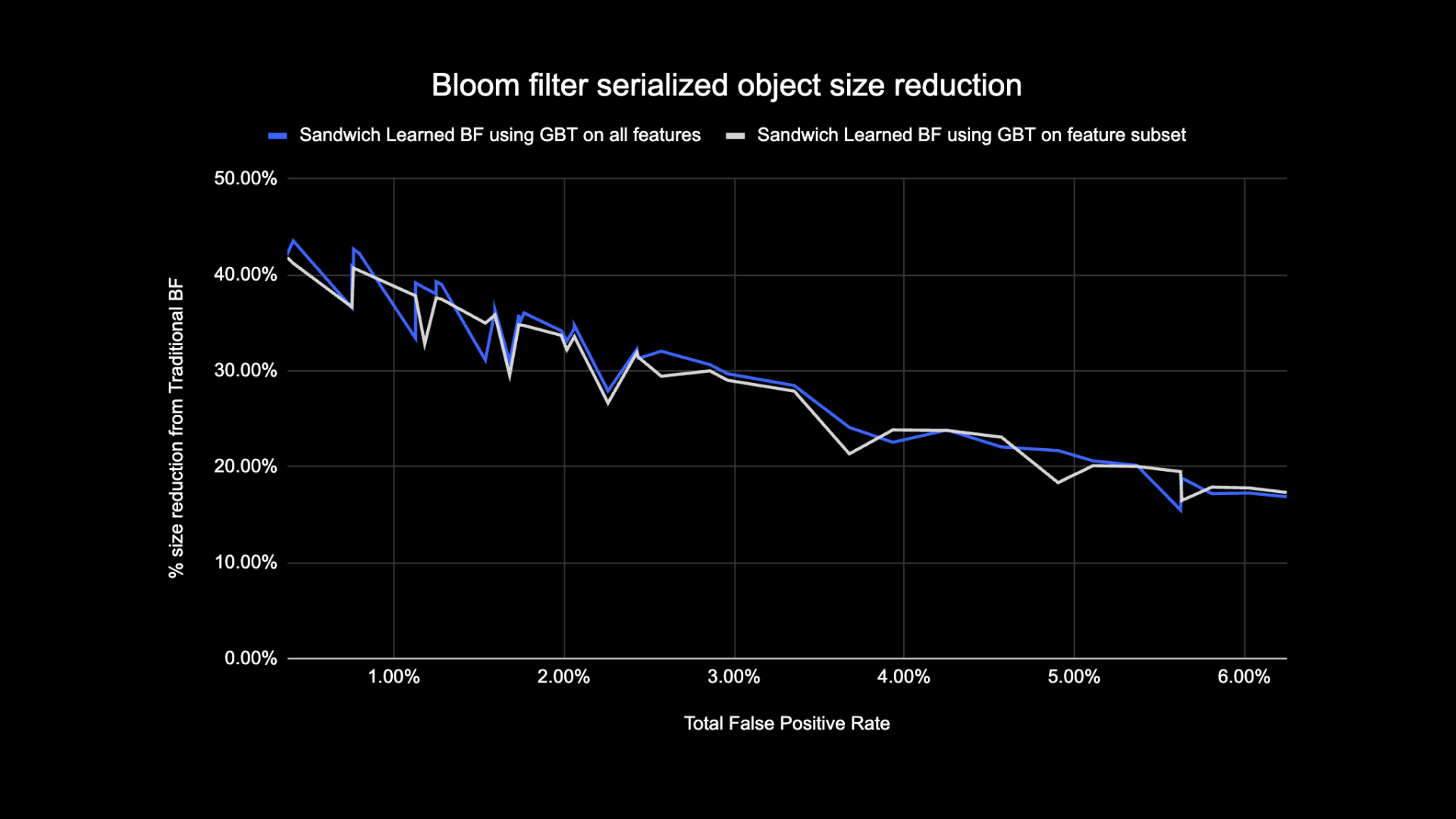

As proven beneath, when a given false optimistic charge, the 2 variants of the discovered Bloom Filter enhance complete object dimension by between 17-42% when in comparison with conventional Bloom Filters.

As well as, by utilizing a smaller subset of options in our gradient boosted tree based mostly mannequin, we misplaced solely a small proportion of optimization whereas making inference sooner.

Realized Bloom Filter Utilization Outcomes

On this part, we evaluate the efficiency of Bloom Filter-based joins to that of normal joins throughout a number of metrics.

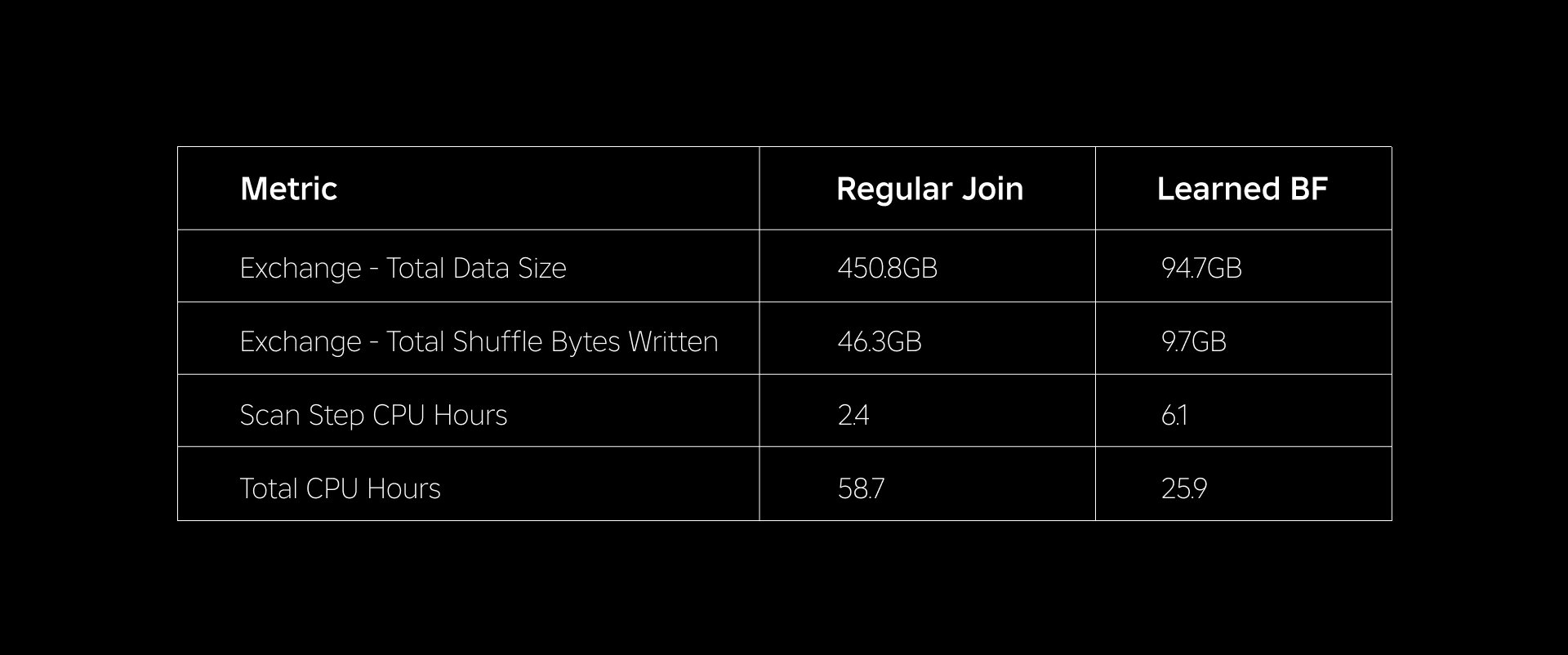

The desk beneath compares the efficiency of workloads with and with out the usage of Realized Bloom Filters. A Realized Bloom Filter with 1% complete false optimistic chance demonstrates the comparability beneath whereas sustaining the identical cluster configuration for each be a part of sorts.

First, we discovered that Bloom Filter implementation outperformed the common be a part of by as a lot as 60% in CPU hours. We noticed a rise in CPU utilization of the scan step for the Realized Bloom Filter method as a result of further compute spent in evaluating the Bloom Filter. Nonetheless, the prefiltering completed on this step diminished the dimensions of knowledge being shuffled, which helped scale back the CPU utilized by the downstream steps, thus decreasing the whole CPU hours.

Second, Realized Bloom Filters have about 80% much less complete information dimension and about 80% much less complete shuffle bytes written than an everyday be a part of. This results in extra steady be a part of efficiency as mentioned beneath.

We additionally noticed diminished useful resource utilization in our different manufacturing workloads underneath experimentation. Over a interval of two weeks throughout all 5 workloads, the Realized Bloom Filter method generated a median day by day price financial savings of 25%, which additionally accounts for mannequin coaching and index creation.

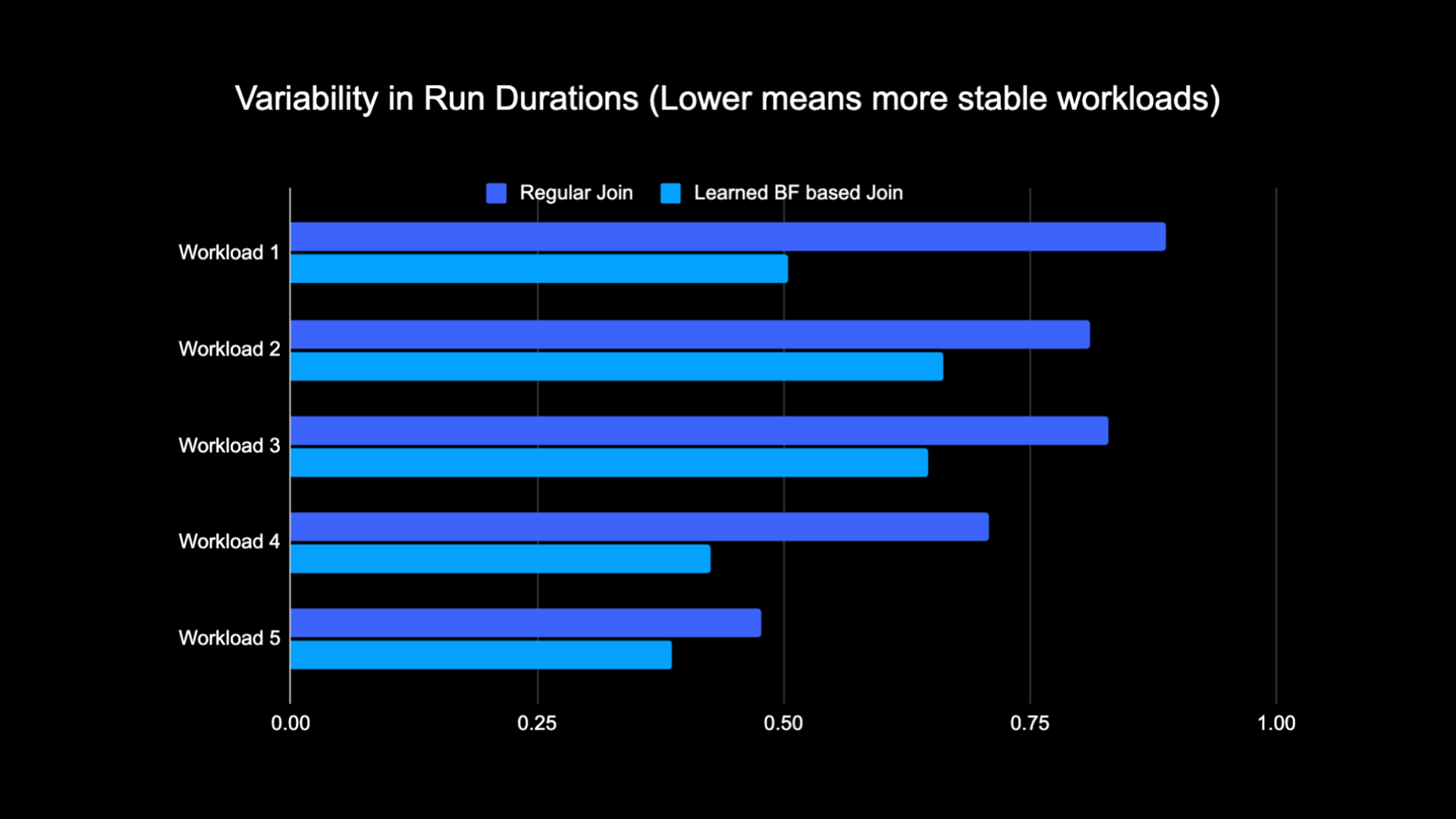

As a result of diminished quantity of knowledge shuffled whereas performing the be a part of, we had been capable of considerably scale back the operational prices of our analytics pipeline whereas additionally making it extra steady.The next chart reveals variability (utilizing a coefficient of variation) in run durations (wall clock time) for an everyday be a part of workload and a Realized Bloom Filter based mostly workload over a two-week interval for the 5 workloads we experimented with. The runs utilizing Realized Bloom Filters had been extra steady—extra constant in period—which opens up the potential for transferring them to cheaper transient unreliable compute sources.

References

[1] T. Kraska, A. Beutel, E. H. Chi, J. Dean, and N. Polyzotis. The Case for Realized Index Constructions. https://arxiv.org/abs/1712.01208, 2017.

[2] M. Mitzenmacher. Optimizing Realized Bloom Filters by Sandwiching.

https://arxiv.org/abs/1803.01474, 2018.

¹As of three months ended June 30, 2023

²As of three months ended June 30, 2023